The AI plays a crucial role in my mage game. I figured that a small or mid-sized group of skilled and versatile enemies, combined with an interesting environmental setup, would be more challenging than a dull level with waves of equally dull enemies. These thoughts led me to formulate specific requirements.

Before diving in: for this article, I linked many code examples from the game in one of my GitHub repositories. It contains only a selection, not the full code. To prove that the system works and to illustrate its effectiveness, I linked some videos at the bottom of the article.

The vision

I wanted…

- no move-in-a-fixed-direction-and-sometimes-turn-to-the-player approach, combined with occasionally-shoot-in-some-direction

- no simple damage on contact, but rather aimed attacks with specified hit zones

- no simple ranged attackers with a fixed position (or at least not only those)

Instead, I wanted…

- agents that are cunning and versatile enough that the player would have a hard time surviving.

- enemies that follow the player in order to deal their blows

- enemies that are also able to flee in certain situations

- enemies that watch their environment for potential targets or threats and, based on that, decide whether to attack or flee

- characters that navigate through the level, find paths, bypass obstacles, jump, climb ladders, etc.

- agents with a variety of abilities for different combat tasks to choose from, including:

  - melee attacks

  - ranged attacks

  - defense abilities

  - special skills, e.g. summoning, casting buffs

  - support actions, e.g. de-buffing

Technical approach

I would need perception, situation analysis, decision making and navigation.

Furthermore, I wanted to make the AI…

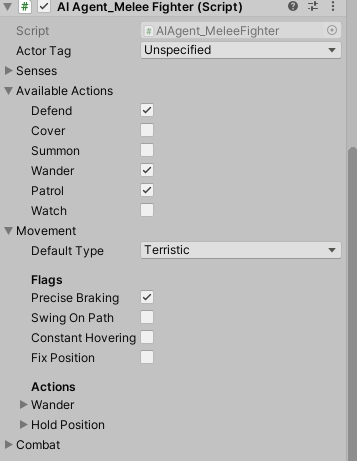

- configurable in a fine-grained, yet simple way from within the Unity editor,

- composable in code from smaller building blocks, like action ABC and behaviour XYZ,

- Example: NPC can fight, trade, when idle it

- equipped with a set of features that can be toggled on or off from within the Unity editor,

- partly customizable at code level with ease, e.g. by replacing a character's behaviour with a custom variant of that behaviour.

Everything would be anchored in a MonoBehaviour called AIAgent, of which different specializations would exist.

Perception

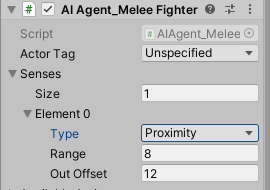

In order to perceive their environment, AI agents would have sensors, which I simply called perception. The simplest perception type is the proximity perception – a simple distance check. In the editor, it would be configurable as such:

Sensors modify the awareness of a creature. The awareness stores which entities are nearby, what condition the creature is in, and several other statuses. The agent uses the awareness to evaluate the situation and, in the end, to make decisions.
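To make the relation between sensor and awareness more tangible, here is a minimal sketch in plain C# (no Unity types, `System.Numerics.Vector2` stands in for positions). The names `Entity`, `Awareness` and `ProximityPerception` are assumptions for illustration and not the classes from the linked repository.

```csharp
using System;
using System.Collections.Generic;
using System.Numerics;

// Hypothetical stand-in for anything an agent can notice.
public sealed class Entity
{
    public string Name { get; }
    public Vector2 Position { get; set; }

    public Entity(string name, Vector2 position)
    {
        Name = name;
        Position = position;
    }
}

// Stores what the agent currently knows about its surroundings.
public sealed class Awareness
{
    private readonly HashSet<Entity> nearby = new HashSet<Entity>();

    public int NearbyCount => nearby.Count;

    public bool IsNear(Entity entity) => nearby.Contains(entity);

    // Called by a sensor to overwrite the set of nearby entities.
    public void ReplaceNearby(IEnumerable<Entity> entities)
    {
        nearby.Clear();
        nearby.UnionWith(entities);
    }
}

// Simplest sensor: everything within a radius counts as perceived.
public sealed class ProximityPerception
{
    private readonly float radius;

    public ProximityPerception(float radius) => this.radius = radius;

    public void Sense(Vector2 origin, IEnumerable<Entity> candidates, Awareness awareness)
    {
        var found = new List<Entity>();
        float radiusSquared = radius * radius;
        foreach (var candidate in candidates)
        {
            // Comparing squared distances avoids a square root per candidate.
            if (Vector2.DistanceSquared(origin, candidate.Position) <= radiusSquared)
                found.Add(candidate);
        }
        awareness.ReplaceNearby(found);
    }
}
```

In this sketch the sensor only writes into the awareness; it does not decide anything. Interpreting the result is left to a separate component.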

The analyzer

The analyzer would be a replaceable and thereby customizable component that takes impulses from the sensors or at random and evaluates the current situation. It asks questions like: What is new here? What type of interaction does the new impulse represent – was it an attack? Is it a threat? Or is it a desirable entity, e.g. a treasure or food? The analyzer then formulates answers like: This entity is a potential target with priority XYZ and this changes the current objective – or it doesn’t. In the latter case: continue the current action! In the former case: stop your current action and take care of the new target. Once the target is clear, decisions have to be made about how to achieve a goal related to that target.

There may be a list of potential targets. Evaluating target priority is also one of the analyzer's responsibilities. The analysis rules are mostly defined in code. A filter for potentially interesting objects can be specified. The distance to the target and its reachability may alter the target priority.
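The priority evaluation could look roughly like the following sketch. All names, the base priorities and the scoring formula are made up for illustration; the actual rules in the game are code-based and more involved.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical classification of what a new impulse means to the agent.
public enum ImpulseKind { Attack, Threat, Desirable }

public sealed class Candidate
{
    public string Name;
    public ImpulseKind Kind;
    public float Distance;
    public bool Reachable;
}

public sealed class Analyzer
{
    private readonly Func<Candidate, bool> filter;

    // The filter decides which objects are interesting at all.
    public Analyzer(Func<Candidate, bool> filter) => this.filter = filter;

    private static float BasePriority(ImpulseKind kind)
    {
        switch (kind)
        {
            case ImpulseKind.Attack: return 3f;
            case ImpulseKind.Threat: return 2f;
            default: return 1f;
        }
    }

    // Assumed formula: closer targets score higher, unreachable ones are penalized.
    public float Score(Candidate candidate)
    {
        float score = BasePriority(candidate.Kind) / (1f + candidate.Distance);
        return candidate.Reachable ? score : score * 0.25f;
    }

    // Returns the best candidate that passes the filter, or null if none does.
    public Candidate PickTarget(IEnumerable<Candidate> candidates)
    {
        Candidate best = null;
        float bestScore = float.NegativeInfinity;
        foreach (var candidate in candidates)
        {
            if (!filter(candidate)) continue;
            float score = Score(candidate);
            if (score > bestScore)
            {
                best = candidate;
                bestScore = score;
            }
        }
        return best;
    }

    // Only a strictly better candidate changes the current objective.
    public bool ShouldSwitch(Candidate current, Candidate candidate)
    {
        if (candidate == null) return false;
        return current == null || Score(candidate) > Score(current);
    }
}
```

`ShouldSwitch` mirrors the two answers described above: either the new entity changes the objective, or the agent continues its current action.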

Decision Making & Action Handling

Internally, there are two finite state machines, which act in function (not in structure) as one hierarchical finite state machine. To put it very simply, one handles the macro level of decision making and action handling, the other the micro level.

- The macro or behaviour FSM decides on actions depending on the general situation the character is in. This FSM stores an action plan that coherently describes a certain type of behaviour, e.g. idle (free time), combat or work.

- Its states are hence called behaviour states.

- It may also formulate a complex job of some NPC or the complete behaviour of a boss creature – with several battle phases.

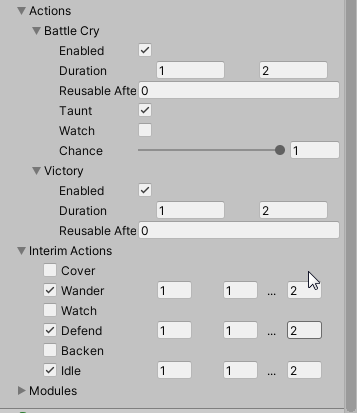

- The micro or action FSM updates the concrete action that was decided on, e.g. defend / store items / make a battle cry.

- An action state manages a specific action. It configures how the agent uses a skill, e.g. it updates a direction and manipulates animations and other action-related states directly.

- Such action states also decide when the action is over and give the task of decision making back to the macro or behaviour FSM.
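The interplay of the two state machines can be sketched as follows. This is a heavily reduced illustration under my own assumptions: the interfaces `IBehaviourState` and `IActionState`, the class `AgentBrain` and the timed action are hypothetical names and not taken from the game code.

```csharp
using System;
using System.Collections.Generic;

// Micro level: one concrete action that reports when it is over.
public interface IActionState
{
    void Enter();
    bool Update(float deltaTime); // returns true once the action has finished
}

// Macro level: decides which action comes next in the current behaviour.
public interface IBehaviourState
{
    IActionState DecideNextAction(); // null means there is nothing to do right now
}

// Example action that simply runs for a fixed duration.
public sealed class TimedAction : IActionState
{
    private readonly string name;
    private readonly float duration;
    private readonly List<string> log;
    private float elapsed;

    public TimedAction(string name, float duration, List<string> log)
    {
        this.name = name;
        this.duration = duration;
        this.log = log;
    }

    public void Enter()
    {
        elapsed = 0f;
        log.Add(name);
    }

    public bool Update(float deltaTime)
    {
        elapsed += deltaTime;
        return elapsed >= duration;
    }
}

// Example behaviour state that works through a fixed action plan.
public sealed class PlannedBehaviour : IBehaviourState
{
    private readonly Queue<IActionState> plan;

    public PlannedBehaviour(IEnumerable<IActionState> plan) => this.plan = new Queue<IActionState>(plan);

    public IActionState DecideNextAction() => plan.Count > 0 ? plan.Dequeue() : null;
}

// Couples both levels: the action runs until it ends, then the behaviour decides again.
public sealed class AgentBrain
{
    private IBehaviourState behaviour;
    private IActionState action;

    public AgentBrain(IBehaviourState initial) => behaviour = initial;

    // Switching the behaviour (e.g. idle to combat) drops the running action.
    public void SetBehaviour(IBehaviourState next)
    {
        behaviour = next;
        action = null;
    }

    public void Update(float deltaTime)
    {
        if (action == null)
        {
            action = behaviour.DecideNextAction();
            if (action == null) return;
            action.Enter();
        }
        // A finished action hands decision making back to the behaviour level.
        if (action.Update(deltaTime)) action = null;
    }
}
```

The two machines stay structurally separate here, but from the outside `AgentBrain.Update` behaves like one hierarchical state machine.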

When checking whether an action is doable, conditions have to be checked repeatedly. I encapsulated such checks in classes, filled their instances with the action parameters (like targets, desired proximity distances etc.), and in the end needed only a simple parameterless method call to verify that a condition was true. For context, it is worth taking a look at the performance of method calls in C#. I did not care about performance here; I cared about writing simple code, and I dislike passing a ton of parameters as method arguments.
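The idea of such parameterless condition objects can be illustrated with a small sketch. `ICondition`, `Positioned`, `WithinRangeCondition` and `AllOf` are hypothetical names chosen for this example.

```csharp
using System;

// A condition is configured once and can then be checked without arguments.
public interface ICondition
{
    bool IsMet();
}

// Hypothetical stand-in for anything with a position.
public sealed class Positioned
{
    public float X;
    public float Y;
}

// Captures its parameters at construction time; IsMet reads the live positions.
public sealed class WithinRangeCondition : ICondition
{
    private readonly Positioned self;
    private readonly Positioned target;
    private readonly float maxDistance;

    public WithinRangeCondition(Positioned self, Positioned target, float maxDistance)
    {
        this.self = self;
        this.target = target;
        this.maxDistance = maxDistance;
    }

    public bool IsMet()
    {
        float dx = target.X - self.X;
        float dy = target.Y - self.Y;
        return dx * dx + dy * dy <= maxDistance * maxDistance;
    }
}

// Combines several conditions; all of them must hold.
public sealed class AllOf : ICondition
{
    private readonly ICondition[] parts;

    public AllOf(params ICondition[] parts) => this.parts = parts;

    public bool IsMet()
    {
        foreach (var part in parts)
        {
            if (!part.IsMet()) return false;
        }
        return true;
    }
}
```

Because the condition holds references to the agent and its target, the same instance can be checked every frame and always reflects the current positions.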

Configurability

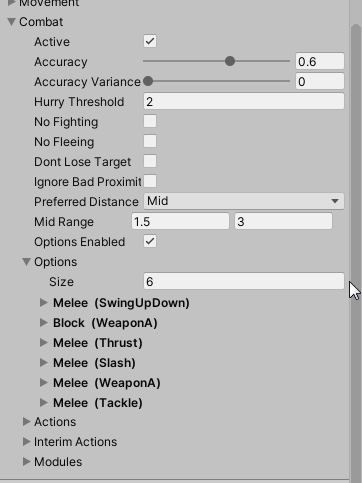

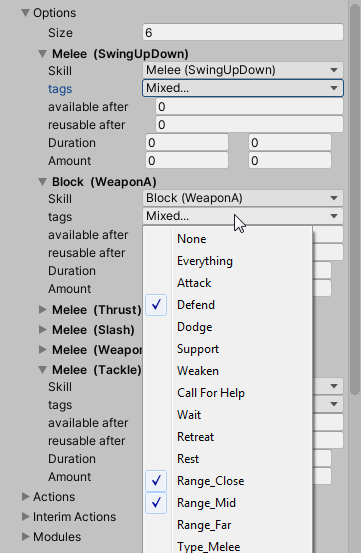

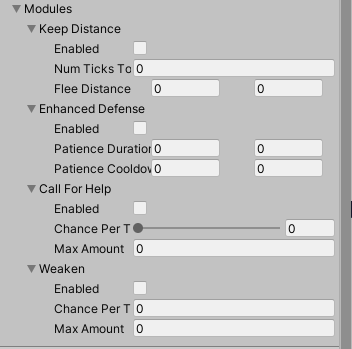

The choice of actions can be parametrized via the editor. Simple actions and specific behaviour modules can be activated or deactivated. The combat action options can be configured with timing information and, in some cases, additional data like probability and availability depending on the range to a potential target.
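As an illustration of what such a configurable combat option might contain, here is a sketch of a serializable data class plus a selector. The field names and the selection logic are assumptions on my part; in Unity, the public fields of a `[Serializable]` class like this would typically show up in the inspector.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical data for one combat option, meant to be edited in the inspector.
[Serializable]
public sealed class CombatOption
{
    public string Name;
    public bool Enabled = true;
    public float Cooldown;              // timing: seconds until the option is usable again
    public float Probability = 1f;      // chance to pick the option when it is available
    public float MinRange;              // availability depending on range to the target
    public float MaxRange = float.MaxValue;

    [NonSerialized] public float ReadyAt; // runtime state, not part of the configuration

    public bool IsAvailable(float now, float distance)
    {
        return Enabled && now >= ReadyAt && distance >= MinRange && distance <= MaxRange;
    }
}

public sealed class CombatOptionSelector
{
    private readonly List<CombatOption> options;
    private readonly Random random;

    public CombatOptionSelector(List<CombatOption> options, Random random)
    {
        this.options = options;
        this.random = random;
    }

    // Returns the first available option that passes its probability roll, or null.
    public CombatOption Choose(float now, float distance)
    {
        foreach (var option in options)
        {
            if (!option.IsAvailable(now, distance)) continue;
            if (random.NextDouble() >= option.Probability) continue;
            option.ReadyAt = now + option.Cooldown;
            return option;
        }
        return null;
    }
}
```

Keeping the runtime cooldown state apart from the configured values means the same configuration can be reused for several agents without side effects in the data that the editor stores.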

Movement & Navigation

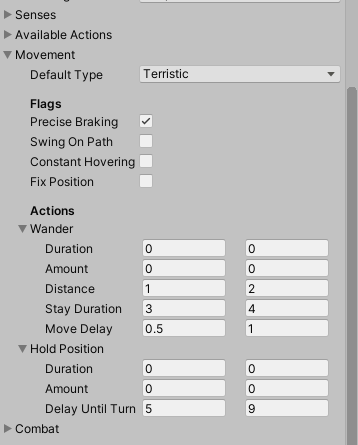

An agent can choose from different movement strategies. There is a freestyle movement strategy, which just tries to get close to the target. And there is a more complex movement strategy, which searches for a path using a scene graph that one can prepare within the editor using a path editor tool. An agent can decide which strategy is more suitable based on the success of its current movement progress. That progress hence has to be monitored. Before switching movement strategies, an agent may also check if it is hindered by an obstacle and try to bypass it.
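Monitoring the progress and switching the strategy could be sketched like this. The class names, the thresholds and the rule "no progress for a while means switch to path following" are assumptions for illustration; the obstacle check mentioned above is left out.

```csharp
using System;

public enum MovementStrategy { Freestyle, PathFollowing }

// Tracks whether the agent is still getting closer to its target.
public sealed class MovementProgressMonitor
{
    private readonly float minProgress;   // distance that counts as real progress
    private readonly float patience;      // seconds without progress before giving up
    private float bestDistance = float.MaxValue;
    private float timeWithoutProgress;

    public MovementProgressMonitor(float minProgress, float patience)
    {
        this.minProgress = minProgress;
        this.patience = patience;
    }

    public bool IsStuck => timeWithoutProgress >= patience;

    public void Report(float distanceToTarget, float deltaTime)
    {
        if (bestDistance - distanceToTarget >= minProgress)
        {
            bestDistance = distanceToTarget;
            timeWithoutProgress = 0f;
        }
        else
        {
            timeWithoutProgress += deltaTime;
        }
    }

    public void Reset()
    {
        bestDistance = float.MaxValue;
        timeWithoutProgress = 0f;
    }
}

// Starts with the cheap strategy and falls back to path finding when stuck.
public sealed class MovementController
{
    private readonly MovementProgressMonitor monitor;

    public MovementStrategy Current { get; private set; } = MovementStrategy.Freestyle;

    public MovementController(MovementProgressMonitor monitor) => this.monitor = monitor;

    public void Tick(float distanceToTarget, float deltaTime)
    {
        monitor.Report(distanceToTarget, deltaTime);
        if (Current == MovementStrategy.Freestyle && monitor.IsStuck)
        {
            Current = MovementStrategy.PathFollowing;
            monitor.Reset();
        }
    }
}
```

The controller only decides which strategy is active; the actual steering or path search would live in the respective strategy implementation.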

Visual examples

Here are some visual examples of how the AI behaves in real situations.

Navigation – See multiple cases in which the AI agents are moving towards their target and follow it if it moves away (e.g. the player)

Mage Combat – See multiple combats between the player and mage NPCs

Phase-wise jobs – Goblin Street Traders

I grant insight into the AI code for the caravan leader and the caravan worker.

Boss Fight – With different escalation steps / phases

I grant insight into the AI code of that boss.

Conclusion

Later on, when adding simple background characters or stationary enemies, like trap entities and stationary bosses, I noticed that this kind of sophisticated approach was unfit. I will cover this in another article. For the moment, I have a fine solution that is flexible enough to cover the variety of AI tasks my game requires.

Image sources: Yves Scherdin, screenshots from Unity Editor and Visual Studio (2025)

Video source: Yves Scherdin, from DevLog playlist of Spiele-oder-so YouTube channel (2025)

Code Sources: https://github.com/YvesScherdin/work-samples/tree/main/CSharp/Unity/AI